Every large organization wants to use AI. Boards ask about it. CIOs budget for it. Architects are asked to “embed intelligence everywhere”. Yet in early 2026, the reality is more nuanced.

AI is powerful. AI is accelerating. AI is still not deterministic enough to be trusted with direct manipulation of core business data at scale.

Hallucinations still exist. Probabilistic outputs still exist. Silent failure modes still exist. In enterprise environments, this is not a theoretical risk. It is an operational risk.

This is why the question is no longer “Can we use AI?”

The real question is “Where can AI safely create value without compromising control, integrity or compliance?”

The First Constraint. AI Is Not Ready to Own Business Data

Modern LLMs are highly intelligent, but they are not authoritative systems of record.

Using AI to directly edit financial data, master data, HR records or transactional systems introduces unacceptable risk. Even a low hallucination rate becomes critical when multiplied by enterprise scale.

For regulated environments, critical infrastructure or customer-sensitive platforms, this is a hard stop.
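To make the scale argument concrete, here is a minimal back-of-the-envelope sketch. The volume and error rate are hypothetical figures chosen only for illustration, not measurements, and the second function assumes errors are independent of each other.

```python
def expected_bad_writes(writes_per_day: int, error_rate: float) -> float:
    """Expected number of incorrect writes per day."""
    return writes_per_day * error_rate


def prob_at_least_one_error(writes_per_day: int, error_rate: float) -> float:
    """Probability that at least one write is wrong, assuming independent errors."""
    return 1.0 - (1.0 - error_rate) ** writes_per_day


# Hypothetical figures, for illustration only.
WRITES_PER_DAY = 1_000_000   # AI-initiated writes against business records
ERROR_RATE = 0.001           # 0.1% of those writes are wrong

print(expected_bad_writes(WRITES_PER_DAY, ERROR_RATE))
print(prob_at_least_one_error(WRITES_PER_DAY, ERROR_RATE))
```

Under these assumptions, a 0.1% error rate still means roughly 1,000 corrupted records every day, and a day without any error is practically impossible.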

At Lynxmind, our baseline assumption is simple.

AI should advise, analyze, correlate, explain and orchestrate.

AI should not autonomously mutate business data.

This principle drives every architecture decision we make.

Where AI Creates Immediate Value. Supporting Services, Not Core Records

AI delivers measurable impact when positioned in supporting layers of the enterprise architecture, not at the core.

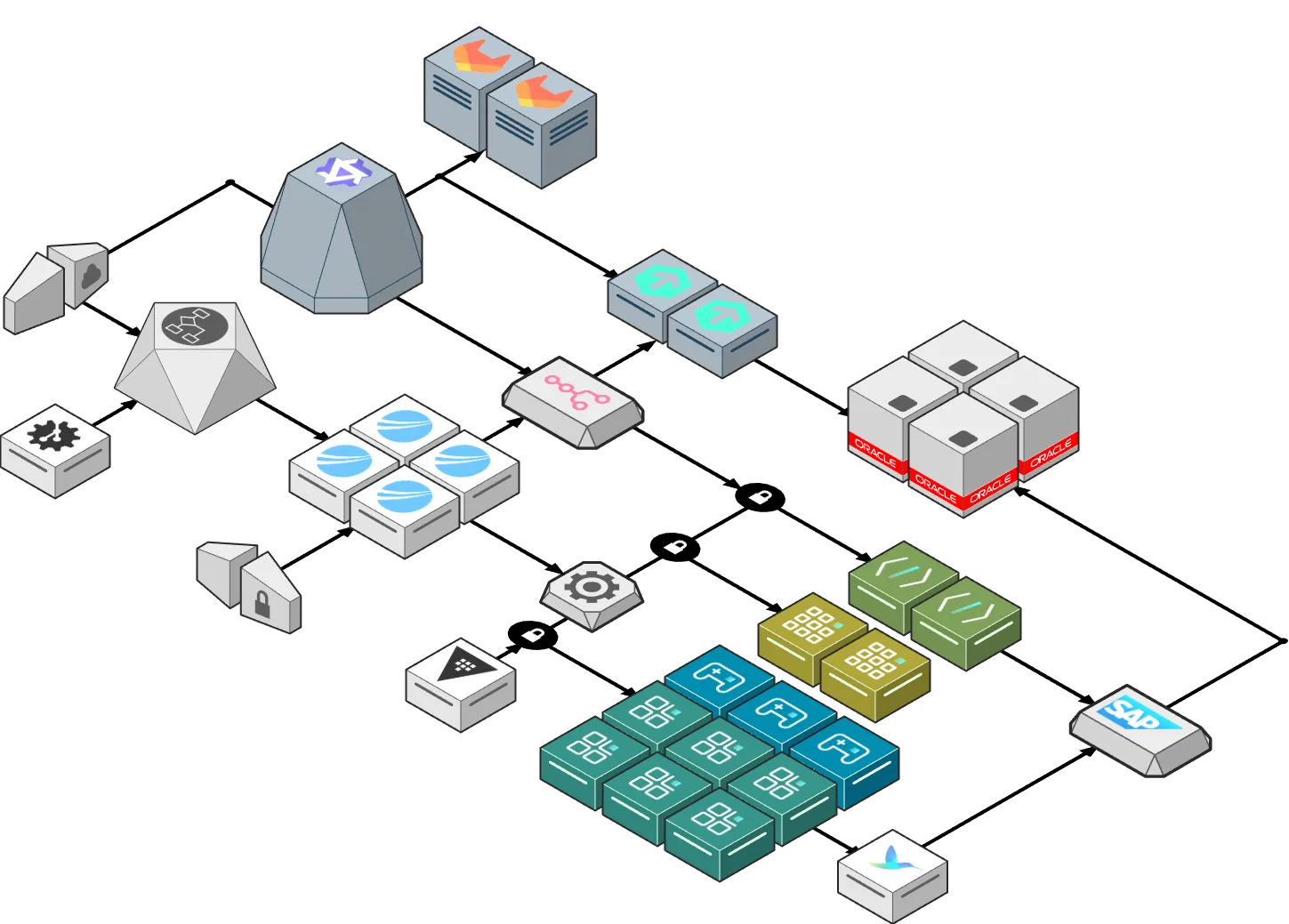

Looking at the diagram above, the pattern is clear.

AI sits alongside platforms.

AI observes systems.

AI reasons over signals.

AI triggers workflows.

AI remains inside guardrails.

Intelligent Monitoring and Root Cause Analysis

One concrete example is Guardian.

Instead of replacing operational teams, AI augments them.

Guardian introduces intelligent monitoring capable of delivering complete root cause analysis, from alert to actionable insight, in under 30 seconds.

Multiple specialized AI agents collaborate using domain expertise across SAP, infrastructure, databases and middleware.

This is a high-impact use case because it operates on signals and telemetry, not business records.

Alerts are analyzed. Dependencies are correlated. Probable causes are ranked.

Human operators remain in control of decisions and remediation.
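The idea of correlating dependencies and ranking probable causes can be illustrated with a small sketch. This is not Guardian's implementation. It is a simplified heuristic, assuming a hypothetical dependency map and a set of components that are currently alerting: a component scores higher the more other alerts sit downstream of it. All component names are invented for the example.

```python
from collections import deque


def downstream_of(component, dependents):
    """All components that directly or transitively depend on `component`."""
    seen, queue = set(), deque([component])
    while queue:
        current = queue.popleft()
        for nxt in dependents.get(current, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(component)  # ignore self-references caused by cycles
    return seen


def rank_probable_causes(alerting, dependents):
    """Rank alerting components by how many other alerts they could explain."""
    scores = {
        c: len(downstream_of(c, dependents) & alerting)
        for c in alerting
    }
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical topology: key -> components that depend on it.
DEPENDENTS = {
    "database": ["sap_app_server"],
    "sap_app_server": ["middleware"],
    "middleware": ["web_portal"],
}
ALERTING = {"database", "sap_app_server", "web_portal"}

ranking = rank_probable_causes(ALERTING, DEPENDENTS)
for component, explained in ranking:
    print(f"{component}: could explain {explained} other alert(s)")
```

The output is a ranked list of candidates only. Nothing in the sketch acts on a system, which mirrors the point above: the operator decides what to do with the ranking.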

This is AI accelerating operational intelligence without crossing trust boundaries.

The Second Constraint. Data Sovereignty Is Non-Negotiable

Another enterprise reality is data control.

Public AI platforms are not acceptable for most large organizations holding client data, regulated data, or intellectual property.

This is why we invested early in private, on-prem AI platforms.

Our journey with lynxserver was driven by three non-negotiable requirements:

- Zero variable costs. No tokens. No per-request billing.

- Full data privacy. Prompts, files, and outputs never leave enterprise infrastructure.

- Architectural sovereignty. Models, workflows and integrations remain under customer control.

This is not an optimization. It is a prerequisite.

Without private AI, most enterprise use cases simply cannot move beyond experimentation.

Automation with Guardrails. AI as an Orchestration Trigger

Another class of high-value use cases sits at the intersection of AI and automation.

By integrating internal LLMs with orchestration platforms such as n8n, enterprises can enable intelligent workflows without touching core data.

Examples include:

- Credential lifecycle handling

- User provisioning and de-provisioning

- Access requests and approvals

- Operational runbooks triggered by contextual analysis

In this model, AI does not execute blindly.

AI proposes actions.

Automation pipelines execute pre-approved deterministic steps.

Failed steps can be reprocessed safely, without any risk of corrupting business data.
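A minimal sketch of this propose-then-execute pattern follows. It assumes a hypothetical `Proposal` structure produced by the LLM and an allowlist of pre-approved steps. In a real deployment each step would be a workflow in the orchestration platform; here plain Python functions stand in for those workflows, and every name is illustrative.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Proposal:
    """What the AI hands over: an action name and its parameters, nothing more."""
    action: str
    params: dict


# Stand-ins for pre-approved, deterministic automation steps (hypothetical).
def reset_credential(params: dict) -> str:
    return f"credential reset queued for {params['user']}"


def deprovision_user(params: dict) -> str:
    return f"deprovisioning queued for {params['user']}"


# The allowlist is the guardrail: only these steps can ever run.
APPROVED_STEPS: Dict[str, Callable[[dict], str]] = {
    "reset_credential": reset_credential,
    "deprovision_user": deprovision_user,
}


def execute(proposal: Proposal) -> str:
    """Run a proposal only if it maps to a pre-approved step."""
    handler = APPROVED_STEPS.get(proposal.action)
    if handler is None:
        raise PermissionError(f"action not pre-approved: {proposal.action!r}")
    return handler(proposal.params)


print(execute(Proposal("reset_credential", {"user": "jdoe"})))

try:
    execute(Proposal("update_invoice", {"id": "42"}))
except PermissionError as err:
    print(f"rejected: {err}")
```

The design choice is that the model never receives a general-purpose execution capability. A hallucinated or out-of-scope action simply has no handler, so it is rejected before anything runs.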

This pattern scales extremely well in large IT organizations.

Why Background Matters. Middleware, SAP, Platform Engineering

The differentiator is not the model. The differentiator is context.

Lynxmind’s background in middleware integration, SAP ecosystems, platform engineering and web development is what allows us to place AI correctly inside large and complex IT landscapes.

We understand how systems interact.

We understand where data originates.

We understand which layers are safe for probabilistic reasoning and which are not.

AI only delivers value when it respects existing orchestration, governance and operational reality.

This is why we do not “add AI everywhere”.

We engineer AI where it belongs.

The Real Enterprise AI Strategy

Enterprise AI in 2026 is not about replacing systems. It is about augmenting intelligence without breaking trust.

- AI observes.

- AI explains.

- AI correlates.

- AI accelerates decision-making.

Guardrails remain intact.

Humans remain accountable.

Systems of record remain deterministic.

That is how AI moves from hype to production at enterprise scale.

And that is how we approach AI at Lynxmind.