In October we shared Lynxserver, our first private AI server. It proved a principle: at Lynxmind, AI lives on metal we own. This is the production version of that idea.

The Build

Two NVIDIA RTX PRO 6000 Blackwell Workstation Edition GPUs in a single chassis.

Assembled in-house. Tuned in-house. Owned in-house.

The Model

At the time of this post we are running Gemma 4 (31B), from Google’s open-weight model family, locally on the server. With 192 GB of VRAM across the two GPUs, the model loads in high precision, runs with full context windows and serves the entire team in parallel.
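To make the VRAM claim concrete, here is a rough back-of-the-envelope sketch. It assumes "31b" means roughly 31 billion parameters and that "high precision" means 16-bit weights; neither figure is stated explicitly above, so treat both as assumptions.

```python
# Rough VRAM budget. All figures in decimal gigabytes.
GPU_COUNT = 2
VRAM_PER_GPU_GB = 96   # 2 x 96 GB matches the 192 GB quoted in the post
PARAMS_BILLION = 31    # assumption: "31b" = ~31 billion parameters
BYTES_PER_PARAM = 2    # assumption: 16-bit weights (e.g. bf16)


def weight_footprint_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone: 1e9 params x bytes / 1e9 bytes per GB."""
    return params_billion * bytes_per_param


total_vram_gb = GPU_COUNT * VRAM_PER_GPU_GB
weights_gb = weight_footprint_gb(PARAMS_BILLION, BYTES_PER_PARAM)
headroom_gb = total_vram_gb - weights_gb  # left for KV cache, activations, runtime

print(f"total VRAM: {total_vram_gb} GB")
print(f"weights:    {weights_gb} GB")
print(f"headroom:   {headroom_gb} GB")
```

Under those assumptions the weights take about a third of the available memory, and the rest is what makes long contexts and many parallel sessions possible.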

Real-World Performance

Numbers from our current production load:

· ~60 concurrent sessions

· 256k context input per session

· ~52 tokens/second per user

That is half the entire Lynxmind team: consultants, agents and automations, all running off a single machine, in parallel, without queueing. It is like having a private Claude Sonnet 4.5 just for your enterprise.
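The per-user figure hides how much the machine produces in total. A small sketch of the arithmetic, assuming every session is generating at the same time (an upper bound, since real sessions spend much of their time idle):

```python
# Aggregate throughput implied by the production figures quoted above.
CONCURRENT_SESSIONS = 60         # "~60 concurrent sessions"
TOKENS_PER_SECOND_PER_USER = 52  # "~52 tokens/second per user"


def aggregate_tokens_per_second(sessions: int, per_user_rate: float) -> float:
    """Upper bound: assumes all sessions are generating simultaneously."""
    return sessions * per_user_rate


aggregate = aggregate_tokens_per_second(CONCURRENT_SESSIONS, TOKENS_PER_SECOND_PER_USER)
per_hour = aggregate * 3600

print(f"aggregate: {aggregate:,.0f} tokens/second")
print(f"per hour:  {per_hour:,.0f} tokens")
```

That works out to roughly 3,120 tokens per second across the machine when all sessions are active.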

The Stack: Open WebUI + LiteLLM

Two pieces sit on top of the GPUs:

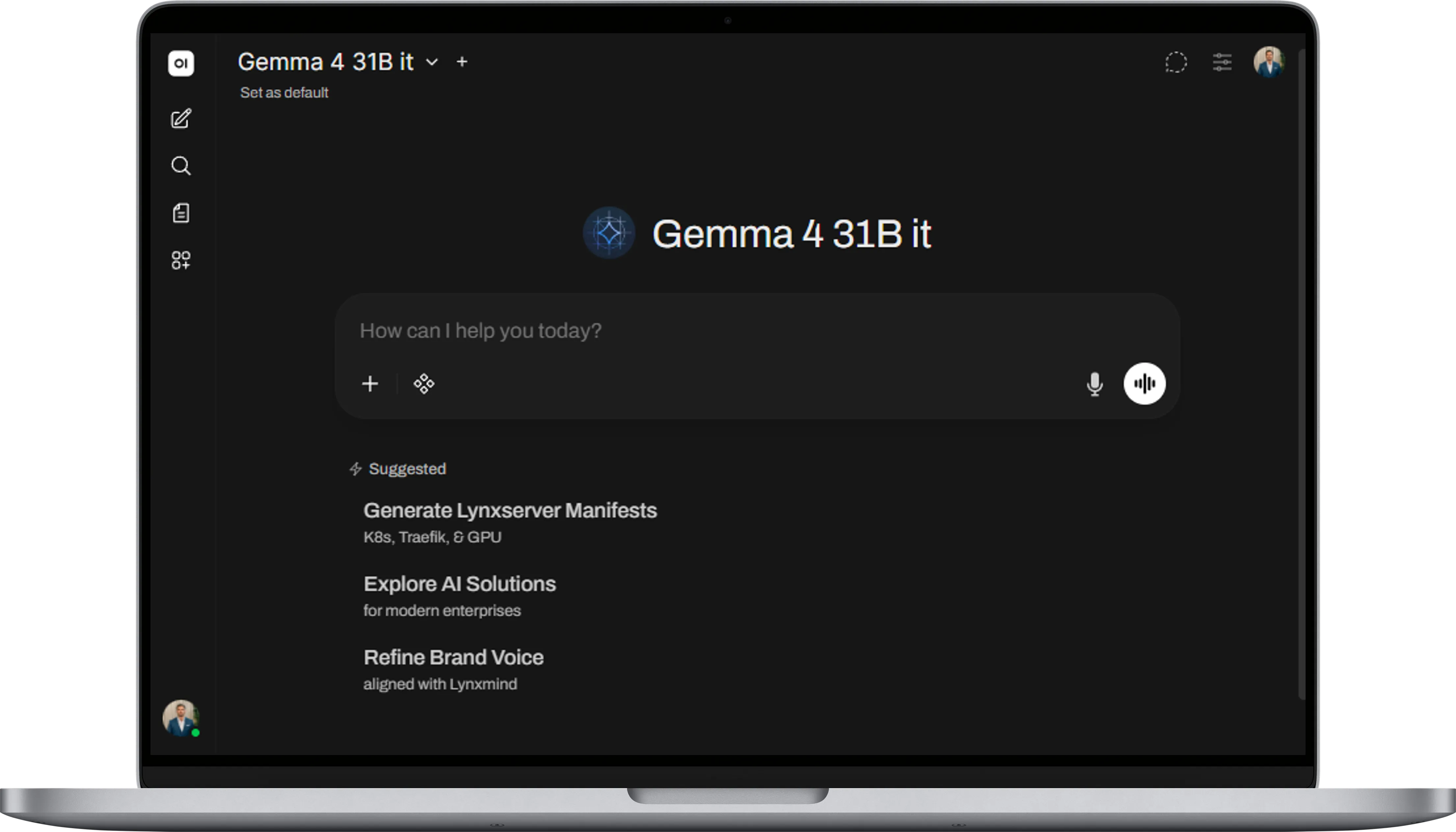

Open WebUI gives our team a clean, ChatGPT-style interface to the model. Same UX everyone already knows, pointed at our own infrastructure. Zero learning curve.

LiteLLM acts as our internal AI gateway:

· Centralised authentication with per-user virtual keys.

· Rate limits and routing across the cluster.

· Full audit trail of every request.

· Guardrails enforced at the gateway: prompt injection filters, PII checks, content policies.

It is what turns a powerful workstation into a governed platform.
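For readers who have not used a gateway like this, here is a minimal sketch of what a client call can look like. LiteLLM's proxy exposes an OpenAI-compatible chat completions endpoint and accepts virtual keys as bearer tokens; the hostname, port and model alias below are hypothetical placeholders, not our real configuration.

```python
import json
import urllib.request

# Hypothetical values for illustration only; not the real deployment.
GATEWAY_URL = "http://lynxserver.internal:4000"  # assumed internal address
MODEL_ALIAS = "gemma-local"                      # assumed model alias on the gateway


def build_chat_request(virtual_key: str, prompt: str,
                       base_url: str = GATEWAY_URL,
                       model: str = MODEL_ALIAS) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # The per-user virtual key is what the gateway authenticates,
            # rate-limits and audits against.
            "Authorization": f"Bearer {virtual_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

A caller would run something like `send(build_chat_request(my_key, "Summarise this log"))`. Because every request carries a per-user key, the gateway can attribute each call to a person or an automation.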

The Cost Math

Across Lynxmind, AI runs constantly. OpenClaw and Hermes agents running 24/7. Guardian correlating telemetry and running multi-agent pipelines. CV automation projects. n8n workflows. Invoice OCR. Internal experiments.

And then there is the per-developer cost. A typical Anthropic Claude license runs around 20€ per consultant, per month, before any heavy usage kicks in. Multiply that across an engineering team and it becomes a real line item, one that scales with every new hire.

Our consultants now point Claude Code in their VS Code straight at our own server’s API endpoint. Same agentic developer experience. Zero per-seat licensing. Zero source code leaving the building.

On a commercial API, the combined monthly bill becomes a line item executives notice. On our own server, the marginal cost of an additional inference is electricity.

The hardware pays for itself in months. Every prompt after that is effectively free.
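The payback claim is simple arithmetic, and it is worth running with your own numbers. The sketch below shows the calculation; the amounts in the example are made-up placeholders, not our actual hardware cost, vendor spend or power bill.

```python
def breakeven_months(hardware_cost: float,
                     monthly_vendor_spend: float,
                     monthly_power_cost: float) -> float:
    """Months until the avoided vendor spend covers the hardware purchase.

    monthly_vendor_spend: what per-seat licences plus API usage would cost.
    monthly_power_cost:   running cost of the server (electricity).
    """
    monthly_saving = monthly_vendor_spend - monthly_power_cost
    if monthly_saving <= 0:
        raise ValueError("no saving: the server costs more to run than the vendor bill")
    return hardware_cost / monthly_saving


# Placeholder inputs for illustration only (EUR); replace with real figures.
example = breakeven_months(
    hardware_cost=30_000,
    monthly_vendor_spend=5_000,
    monthly_power_cost=400,
)
print(f"break-even after about {example:.1f} months")
```

With those placeholder inputs the server pays for itself in roughly six and a half months. The result scales linearly with hardware cost, so the comparison is easy to redo for a larger or smaller build.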

We are also no longer exposed to price fluctuations from AI vendors. Intelligence will become a commodity like water or electricity, but unlike them it is not regulated by governments. We chose to own ours.

But the deeper win is cultural: cost stops being a reason to say no. Engineers stop rationing. Consultants stop second-guessing. Innovation stops being budgeted and becomes ambient.

The Three Constraints, Restated

This is the same architecture we advocate for our enterprise clients:

· Sovereignty. Data never leaves our infrastructure.

· Cost control. No per-token charges. No per-seat billing.

· Privacy. Prompts, code and outputs stay on hardware we own.

What we preach, we run.

Closing

The first Lynxserver was a proof. This one is a platform.

Hardware assembled by our team. Open-weight model running locally. Open WebUI for the team, LiteLLM enforcing the guardrails. Zero outbound traffic.

At Lynxmind, AI sovereignty is not a slogan. It is a build sheet, a rack and a team that knows how to put it all together.