It is a common dilemma: modern enterprises need the efficiency of Artificial Intelligence to scale, but strict compliance and security policies block sending sensitive data to public clouds like OpenAI or Anthropic.

In this case study, we share how Lynxmind helped a B2B organization break this deadlock by implementing a robust automation architecture that keeps data strictly within the company’s perimeter.

The Challenge: Efficiency vs. Privacy

Our client manages critical operational processes filled with sensitive data (PII, financial records, and intellectual property). Their goal was clear: automate complex decision-making workflows.

However, traditional SaaS (Software as a Service) solutions presented unacceptable risks:

- Data exposure: Sending information to external APIs violated security policies.

- Black‑box effect: Lack of control over how data would be retained or used to train third-party models.

- Dependency: Risk of vendor lock-in on infrastructure outside the company’s control.

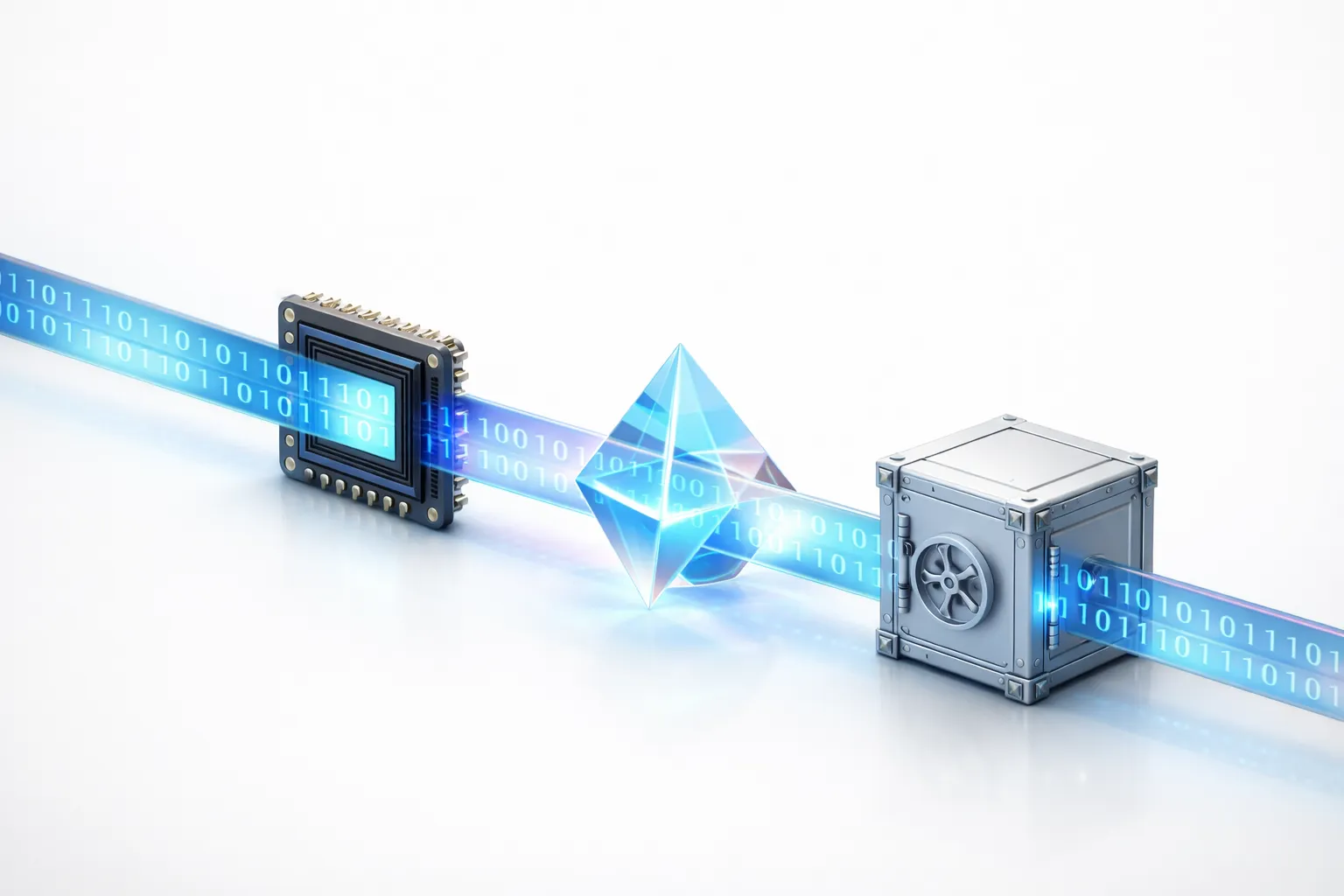

The technical challenge was to create a system that was as smart as the cloud but as secure as a vault.

The Solution: A “Zero-Trust” Architecture with n8n

We designed an infrastructure where data sovereignty is priority zero. The solution relied on open-source components and controlled execution.

1. The Engine: Self‑Hosted n8n

Instead of the cloud version, we deployed n8n on self-hosted infrastructure. To further harden the environment, we used only n8n’s official nodes. These verified components undergo rigorous testing, ensuring predictable behavior and eliminating the security risks often associated with unverified community code.

This architecture ensured that workflow orchestration took place on isolated servers, without any unauthorized communication with the public internet.
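One way to make the "no unauthorized communication" rule checkable is an explicit allowlist of internal networks. The sketch below is illustrative only: the CIDR ranges and the `is_allowed_destination` helper are assumptions, not the client’s actual configuration, and in practice such a rule would normally be enforced at the firewall or network-policy layer rather than in application code.

```python
import ipaddress

# Hypothetical internal ranges; a real deployment would list its own subnets.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_allowed_destination(ip: str) -> bool:
    """Return True only if the IP address falls inside an allowlisted network."""
    try:
        address = ipaddress.ip_address(ip)
    except ValueError:
        # Anything that is not a valid IP address is rejected outright.
        return False
    return any(address in network for network in ALLOWED_NETWORKS)
```

A default-deny check like this treats every destination as blocked unless it is explicitly listed, which matches the intent of isolating the orchestration servers.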

2. The Brain: Self‑Hosted AI (LLMs)

We replaced GPT-5 API calls with open-source language models (such as Llama 3 or gpt-oss), running directly on the client’s infrastructure (whether on-premises or within a controlled private cloud).

This ensured data sovereignty, allowing for fast and secure inference without a single byte of text leaving the organization’s secure perimeter.
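As a rough illustration of what a local inference call can look like, the sketch below targets an OpenAI-compatible `/v1/chat/completions` endpoint, which several self-hosted model servers expose. The host name, port, model name, and prompt are placeholders, and the case study does not state which serving stack was used, so treat every identifier here as an assumption.

```python
import json
import urllib.request

# Assumed internal endpoint and model name; both are placeholders.
LOCAL_LLM_URL = "http://llm.internal.example:8000/v1/chat/completions"
MODEL_NAME = "llama-3-8b-instruct"


def build_inference_request(text: str) -> urllib.request.Request:
    """Build a POST request for the internal model server (nothing is sent yet)."""
    body = {
        "model": MODEL_NAME,
        "messages": [
            {"role": "system",
             "content": "Classify the risk of the record as low, medium, or high."},
            {"role": "user", "content": text},
        ],
        "temperature": 0,  # reduce run-to-run variation in the answer
    }
    return urllib.request.Request(
        LOCAL_LLM_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def classify(text: str) -> str:
    """Send the request to the internal endpoint and return the model's reply."""
    with urllib.request.urlopen(build_inference_request(text), timeout=30) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"].strip()
```

Because the URL points at an internal host, the request and the text it carries stay inside the private network, provided the network rules prevent that host from reaching the public internet.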

3. The Workflow in Action (Practical Example)

To illustrate, we created deterministic pipelines that operate as follows:

- Ingestion – The system receives raw data from internal APIs.

- Local inference – The AI model analyzes the content (e.g., risk classification or entity extraction) within the private network.

- Action – n8n forwards the decision to the destination system (ERP/CRM).

- Sanitization – After execution, memory is cleared. The generated technical logs do not contain the sensitive payload, only the operation status.
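The four steps above can be sketched as a single function. This is a simplified stand-in for the n8n workflow, not the workflow itself: `run_pipeline`, `Decision`, and the `classify` and `deliver` callables are hypothetical names, and the sketch covers only the logging half of sanitization (status without payload), since reliably clearing memory depends on the runtime.

```python
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("pipeline")


@dataclass
class Decision:
    record_id: str
    label: str


def run_pipeline(
    record: dict,
    classify: Callable[[str], str],
    deliver: Callable[[Decision], None],
) -> Decision:
    """Ingest one record, classify it locally, forward the decision, log status only."""
    record_id = record["id"]            # Ingestion: raw data from an internal API
    try:
        label = classify(record["content"])   # Local inference inside the private network
        decision = Decision(record_id, label)
        deliver(decision)               # Action: hand the decision to the ERP/CRM
    except Exception as exc:
        # Log the exception type only; its message could echo the payload.
        logger.error("record=%s status=failed error=%s", record_id, type(exc).__name__)
        raise
    # Sanitization: the log line carries the record ID and status, never the content.
    logger.info("record=%s status=ok", record_id)
    return decision
```

Passing `classify` and `deliver` in as parameters keeps the pipeline deterministic to test: the model and the destination system can be replaced with stubs without touching the control flow.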

Security and Governance

We treated every workflow as a critical security component:

- External secrets management: We decoupled credential storage from the automation platform. Instead of saving API keys inside n8n, we integrated an External Secrets Manager. Credentials are injected dynamically only at runtime and never stored at rest within the n8n database.

- Least Privilege Principle: Each n8n node accesses only the strictly necessary resources.

- Auditability: The system tracks who executed what, ensuring compliance with internal audits.
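A minimal sketch of two of these controls, runtime secret resolution and audit records, is shown below. It does not use any n8n or vendor API: `resolve_secret`, `audit_entry`, and the `fetch` parameter are hypothetical, and `fetch` stands in for whichever secrets-manager client is actually deployed (environment variables are used here only as a default for illustration).

```python
import os
from datetime import datetime, timezone
from typing import Callable, Optional


def resolve_secret(
    name: str,
    fetch: Callable[[str], Optional[str]] = os.environ.get,
) -> str:
    """Look a credential up at call time instead of storing it with the workflow."""
    value = fetch(name)
    if not value:
        # Fail closed: a missing secret stops the run rather than using a fallback.
        raise RuntimeError(f"secret {name!r} is not available at runtime")
    return value


def audit_entry(user: str, workflow: str, status: str) -> dict:
    """Build an audit record of who ran which workflow, with a UTC timestamp."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "workflow": workflow,
        "status": status,
    }
```

The audit record deliberately holds identifiers and status only, so it can be retained for internal audits without becoming another store of sensitive data.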

The Results

The implementation proved that innovation does not have to be sacrificed for security.

- Zero Exfiltration: Complete automation of critical processes without exposure to third parties.

- Operational Reduction: Drastic decrease in repetitive manual tasks, freeing the team for strategic analysis.

- Predictability: By eliminating the latency and variability of public APIs, we achieved constant and reliable execution times.

This architecture validated a new path for enterprise automation: the ability to use powerful AI while keeping the house keys in your pocket.